
Optimizing for AI search is not a replacement for SEO. It is the next layer on top of it, requiring a shift in how content is structured, where authority is built, and how visibility is measured. Here is what practitioners need to understand before diving in.

A content strategist at a mid-sized SaaS company runs a monthly audit. Rankings are holding. Blog traffic is stable. By every traditional metric, the content program is performing. Then a sales rep flags something: three enterprise prospects mentioned they had researched the category using ChatGPT or Perplexity before the discovery call. None of them had found the company. One had been pointed directly toward a competitor.

The content team had optimized for search engines. They had not optimized for the systems that now synthesize search results into single, direct answers.

This is the operational gap that AI search optimization closes. When a buyer asks an AI platform a question in your category, the platform does not return ten links and let the user decide. It retrieves relevant content, extracts key passages, and generates a synthesized response. If your content is not structured for that retrieval process, it does not matter how well it ranks. It will not make it into the answer.

The numbers bear out the urgency. ChatGPT now has more than 800 million weekly active users. Google's AI Overviews appear in more than a quarter of all searches. AI-referred web sessions grew over 500% between January and May 2025. These are mainstream behaviors, not experiments.

This guide covers how AI retrieval actually works, how it differs from traditional SEO, and the specific content and technical practices that improve AI visibility. Each section is written for practitioners who are already running a search program and need to know what to change, add, or stop doing.

Optimizing for AI search means structuring your content so that AI systems can retrieve, interpret, and cite it when generating answers to relevant queries. It is not primarily about ranking. It is about extractability, credibility, and clarity at the passage level, not just the page level.

The practice sits under the broader umbrella of Generative Engine Optimization (GEO), which covers everything a brand does to improve its presence in AI-generated outputs. GEO is the formal discipline: the combination of content strategy, technical structure, and earned authority that determines whether an AI system includes your brand in its synthesized responses. Answer Engine Optimization (AEO) is a closely related term that focuses more specifically on structuring content for direct answer extraction, including featured snippets and AI Overviews. Both terms describe real practices, and both are now core parts of a competitive content strategy.

The distinction from traditional SEO matters practically. SEO optimizes your pages to rank in a list. AI optimization ensures your content is usable by a system that reads across dozens of sources and produces a single response. A page that ranks third but is dense, promotional, or poorly structured can still be passed over at the retrieval step. A page that answers a question directly in the first paragraph, uses clean structure, and is supported by credible third-party mentions is far more likely to be extracted and cited.

Traditional search engines evaluate individual pages, score them against a set of ranking signals, and present them in a ranked list. The user decides what to click. Your job was to be in that list, ideally near the top.

AI search systems behave differently at a fundamental level. Perplexity's documentation describes its process clearly: the system searches the web, selects relevant sources, and generates synthesized responses while citing those references. The user does not see a list. They receive an answer. Your content needs to be the source that answer is drawn from.

The table below maps the practical differences for content teams:

| Dimension | Traditional SEO | AI Search Optimization |
|---|---|---|
| Optimization target | Ranked position in results list | Inclusion in synthesized answer |
| Query format | Short keyword strings (~4 words) | Conversational questions (~23 words) |
| Content unit | Full page | Extractable passage or answer block |
| Authority signals | Backlinks, domain authority | Third-party mentions, entity clarity, earned citations |
| Success metric | Rankings, CTR, organic traffic | Visibility Score, Citation Share, Share of Voice |
| Technical requirements | Crawlability, speed, metadata | All of the above, plus structured data and answer-first formatting |
| Zero-click risk | Moderate (featured snippets) | High: 93% of AI sessions end without a site visit |

The implication for strategy is that these two disciplines reinforce each other rather than replacing one another. Google has stated explicitly that succeeding in AI-powered search does not require special tricks. High-quality content, crawlability, structured data, and user-first design remain foundational. AI optimization extends those foundations into the synthesis layer. It does not rebuild from scratch.

The behavioral shift from link-based search to answer-based search is no longer a trend to monitor. It is an operating condition.

More than 25% of all Google searches now trigger an AI Overview. ChatGPT has more than 800 million weekly active users. Perplexity processed 780 million queries in May 2025 alone, a 239% increase from August 2024. AI queries average 23 words, compared to 4 words for traditional searches, which means buyers are asking detailed, specific questions and expecting direct, synthesized answers in return.

The commercial stakes are real. Roughly 80% of consumers who use AI for shopping rely on it for at least half of their purchase decisions. Buyers are forming opinions before they ever visit a website. If your brand is absent from AI-generated answers in your category, you are being filtered out of consideration before the buyer journey has even formally started.

Industry analysis on the shift in marketing investment frames this as a strategic reallocation, not a tactical adjustment. The competitive battleground has moved upstream, from the click to the answer itself. Teams that continue optimizing only for rankings are effectively competing for second-stage visibility, assuming a buyer will go looking on their own after the AI has already shaped their initial understanding.

The first-mover window is real. Only 16% of brands currently track AI search performance in any systematic way. That gap exists now. It will not exist in two years.

Most content about AI optimization focuses on tactics. Few explain the mechanism. Understanding why certain content gets retrieved and cited, while equally well-written content does not, is what separates strategies that hold up from those that produce short-term gains and fade.

AI search platforms use a process called Retrieval-Augmented Generation, or RAG. When a user submits a query, the model does not generate an answer purely from its training data. It retrieves relevant content from its index, extracts key passages, and generates a response by synthesizing those passages. The retrieval step is where visibility is won or lost.
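To make the retrieval step concrete, here is a deliberately simplified, hypothetical Python sketch. It is not how ChatGPT, Perplexity, or Google actually retrieve content: it assumes each page is split into passages on blank lines and scores passages with a basic TF-IDF-style term weighting, whereas production systems generally rely on learned embeddings and many more signals. The function names `split_passages` and `retrieve` are illustrative. What it shows is structural: the unit being scored is the passage, not the page.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and keep simple alphanumeric word tokens.
    return re.findall(r"[a-z0-9]+", text.lower())


def split_passages(page: str) -> list[str]:
    # Treat each blank-line-separated block as one retrievable passage.
    return [p.strip() for p in page.split("\n\n") if p.strip()]


def retrieve(query: str, pages: dict[str, str], k: int = 3) -> list[tuple[float, str, str]]:
    """Return the top-k (score, page_id, passage) triples for a query."""
    passages = [(pid, p) for pid, page in pages.items() for p in split_passages(page)]
    n = len(passages)
    # Document frequency: how many passages contain each term.
    df = Counter()
    for _, passage in passages:
        df.update(set(tokenize(passage)))
    query_terms = set(tokenize(query))
    scored = []
    for pid, passage in passages:
        tf = Counter(tokenize(passage))
        length = sum(tf.values()) or 1
        # Length-normalized term frequency times a smoothed inverse document frequency.
        score = sum(
            (tf[t] / length) * math.log(1 + n / df[t])
            for t in query_terms
            if t in tf
        )
        scored.append((score, pid, passage))
    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:k]


pages = {
    "answer-first": (
        "What is GEO? Generative engine optimization is the practice of "
        "structuring content so AI systems can cite it.\n\n"
        "Background and history follow here."
    ),
    "essay": (
        "Our company was founded in 2015.\n\n"
        "We love our customers and our platform.\n\n"
        "Eventually we discuss generative engine optimization in passing."
    ),
}

best_score, best_page, best_passage = retrieve("what is generative engine optimization", pages, k=1)[0]
print(best_page)  # answer-first
```

In this toy run the essay's buried passage scores close behind; the answer-first passage wins because it also matches the phrasing of the question itself. Treat this only as a model of the passage-level triage described here.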

Here is the analogy that makes this mechanism click for practitioners who already understand content strategy: think about how a skilled researcher prepares a briefing document for an executive. They do not read every relevant report cover to cover and synthesize everything. They skim for the sources that answer the specific question most directly and cleanly. A source that buries its answer halfway through a long narrative gets passed over in favor of one that leads with the conclusion. An AI retrieval system makes the same kind of triage judgment, at scale, in milliseconds.

What that triage favors: content that answers the specific question immediately, structured text with clear headings that map to real queries, entities that are defined and consistent with how they appear across other credible sources, and domains that have accumulated third-party credibility signals beyond their own website. What it skips: dense narrative prose, promotional language dressed as informational content, pages with no structural signal about what question they are answering, and content that exists primarily to hit a keyword.

Search Engine Land's step-by-step guide to optimizing content for AI search outlines the mechanics clearly: answer-first formatting, descriptive headings, precise entity definitions, and logical flow are the structural elements that AI systems use to identify and extract usable content. These are not design choices. They are retrieval requirements.

The most consistently underused tactic in AI optimization is answer-first formatting at the section level. Most content is written like an essay: it builds context, explains the background, and arrives at the point after several paragraphs. That structure works well for human readers who follow along. It does not work well for AI systems that are extracting discrete passages to answer specific queries.

The practice is simple in theory and requires real discipline in execution. Every section heading that maps to a real search query should be a question. The first 40 to 60 words of that section should directly answer that question. The rest of the section can provide context, examples, and depth. The point is that the answer has to come first, not after the windup.

This structure does two things simultaneously. It makes content more likely to be extracted by AI retrieval systems that are looking for clear, passage-level answers. It also improves the experience for human readers, who increasingly scan content the same way AI systems parse it: looking for the answer before deciding whether to read further. These two goals are the same goal. Writing for AI extractability and writing for human clarity are not in tension. They both require getting to the point.

The practical test: read the first paragraph of each section and ask whether it could stand alone as a useful, accurate answer to the question in the heading. If it cannot, the section needs to be restructured, not expanded.
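The practical test above can be partly automated during an audit. The Python sketch below is a hypothetical helper, not a standard tool: it assumes drafts are written in Markdown with `#`-style headings, treats a heading as a question only if it ends in `?`, and checks whether the opening paragraph falls inside the 40-to-60-word window. A word count cannot tell you whether the paragraph actually answers the question, so it only flags candidates for human review.

```python
import re

# Matches Markdown ATX headings such as "## What is GEO?" and captures the heading text.
HEADING = re.compile(r"^#{1,6}[ \t]+(.+)$", re.MULTILINE)


def audit_sections(markdown: str, min_words: int = 40, max_words: int = 60) -> list[dict]:
    """Flag sections whose heading is not a question or whose opening
    paragraph falls outside the target word window."""
    # With one capture group, split returns [preamble, heading1, body1, heading2, body2, ...].
    parts = HEADING.split(markdown)
    report = []
    for heading, body in zip(parts[1::2], parts[2::2]):
        paragraphs = [p.strip() for p in body.strip().split("\n\n") if p.strip()]
        opening = paragraphs[0] if paragraphs else ""
        words = len(opening.split())
        issues = []
        if not heading.strip().endswith("?"):
            issues.append("heading is not phrased as a question")
        if words < min_words:
            issues.append(f"opening paragraph too short ({words} words)")
        elif words > max_words:
            issues.append(f"opening paragraph too long ({words} words)")
        report.append({"heading": heading.strip(), "opening_words": words, "issues": issues})
    return report


draft = (
    "## What is generative engine optimization?\n\n"
    + " ".join(["word"] * 45)
    + "\n\nSupporting context goes here.\n\n"
    "## Background\n\n"
    "Our story begins in 2015."
)
for section in audit_sections(draft):
    print(section["heading"], section["issues"])
```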

One of the most common mistakes content teams make when they pivot to AI optimization is treating it as a new channel for promoting the product. The instinct is understandable: more visibility, more mentions, more top-of-funnel reach. But AI systems are information-first. They are designed to retrieve and surface accurate, useful answers. When content reads like a product pitch, it fails the credibility test at the retrieval level.

The correct sequencing is authority first, conversion second. Build a body of informational content that genuinely answers the questions buyers in your category are asking, without inserting product mentions at every turn. Prioritize accuracy, depth, and completeness over promotional language. Let the information carry the credibility. This is not a sacrifice of commercial intent. It is the prerequisite for it.

Once your domain has established retrieval authority through consistent, high-quality informational content, the dynamic changes. AI systems have learned to associate your domain with reliable information. Product-forward content published from a credible, established domain gets treated differently than the same content published from a domain with no informational track record. The sequencing is the strategy. Brands that skip the authority-building phase and go straight to product content are trying to convert an audience that AI systems have not yet decided to send them.

Schema markup is one of the most consistently underleveraged technical practices in AI optimization. Most content teams treat it as a publishing checklist item: add FAQPage schema, mark it done, move on. That understates what it actually does at the retrieval level.

Properly implemented schema markup tells AI crawler systems exactly what type of content they are reading, what question it answers, and what entities it defines. FAQPage schema, in particular, functions as a list of pre-formatted answer candidates. When an AI system is trying to answer a specific question, it is explicitly looking for content marked as a question-and-answer pair. A well-structured FAQ with proper schema is not just easy to read. It is structured in the exact format the retrieval step is looking for.

The same logic applies to HowTo, Article, and DefinedTerm schemas. Each one provides a machine-readable signal about the content's purpose, which makes accurate retrieval more likely and reduces the probability of a model misinterpreting what a page is actually about. Search Engine Land's full GEO guide reinforces that structured data remains a foundational signal for AI search, not a legacy SEO practice. The underlying principle is the same: crawlability is the infrastructure that everything else depends on. Content strategy is the signal. Technical structure is what ensures the signal gets read correctly.
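As an illustration of what FAQPage markup looks like in practice, the Python sketch below generates schema.org JSON-LD from question-and-answer pairs. The types and properties (`FAQPage`, `mainEntity`, `Question`, `acceptedAnswer`, `Answer`, `name`, `text`) come from the schema.org vocabulary; the helper functions `faq_page_jsonld` and `script_tag` are hypothetical. The marked-up questions and answers should match the FAQ text visible on the page, and the output is worth checking in a validator such as the Schema Markup Validator before publishing.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    # Escape "</" so answer text containing "</script>" cannot close the tag early;
    # "<\/" is still valid JSON and decodes back to "</".
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


pairs = [
    (
        "Does schema markup help with AI search?",
        "Schema markup tells AI crawler systems what type of content they are "
        "reading, what questions it answers, and what entities it defines.",
    ),
]
print(script_tag(faq_page_jsonld(pairs)))
```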

Fully automated content pipelines are one of the most significant risks in AI optimization right now, and the risk is compounding as more teams adopt AI-assisted publishing at scale. This is not an argument against using AI tools in the content process. It is an argument against a specific pattern: publishing content at high volume where the primary goal is prompt coverage rather than genuine utility.

AI retrieval systems are increasingly effective at identifying content that exists to satisfy query patterns rather than to answer real questions. The tells are recognizable: paragraphs that read like keyword clusters stitched together, no coherent argument across the piece, no original perspective or analysis, and no human editorial judgment applied anywhere in the process. This type of content gets deprioritized in retrieval, not immediately, but systematically over time.

The compounding risk is significant. A domain associated with low-quality, query-stuffed content builds a retrieval reputation that affects every page it publishes, including the genuinely good ones. The deficit is difficult to reverse once it is established. The discipline required here runs against the grain of teams under pressure to produce more content faster. The correct answer is to publish less and publish better. A smaller body of high-quality informational content builds stronger retrieval authority than a large volume of content that exists primarily to capture prompt patterns.

The practices above are not a checklist to complete in parallel. They work as a sequence, and the order matters.

Start with a content audit. Identify your existing pages that already map to real conversational queries and evaluate whether they are structured for answer extraction. For each section that maps to a real question, check whether the first paragraph answers it directly. Pages that do not pass this test should be restructured before you build anything new on top of them.

Next, apply schema markup to all FAQ content, how-to sections, and definitional content. This is the technical layer that makes your answer-block structure legible to retrieval systems. It takes less than a day per article to implement correctly, and it starts working as soon as crawlers revisit the updated pages.

From there, audit your content mix. What percentage of your published content is genuinely informational versus product-forward? If the mix skews commercial, that is the authority deficit that needs to be addressed first. Build out the informational layer before trying to increase product-centric visibility.
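The content-mix audit is simple arithmetic once each page has been labeled. In this hypothetical Python sketch, the `type` labels (`informational`, `product`) are assumed to be assigned by a person reviewing the inventory; the code only computes percentages and reports whether product-forward pages outnumber informational ones, which is one assumed way to operationalize "skews commercial."

```python
from collections import Counter


def content_mix(pages: list[dict]) -> dict[str, float]:
    """Percentage of pages in each content category, rounded to one decimal."""
    counts = Counter(page["type"] for page in pages)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kind: round(100 * n / total, 1) for kind, n in counts.items()}


def skews_commercial(mix: dict[str, float]) -> bool:
    # Assumed rule of thumb: more product-forward than informational content.
    return mix.get("product", 0.0) > mix.get("informational", 0.0)


inventory = [
    {"url": "/guide/what-is-geo", "type": "informational"},
    {"url": "/guide/ai-overviews", "type": "informational"},
    {"url": "/features/tracking", "type": "product"},
    {"url": "/pricing", "type": "product"},
    {"url": "/compare/us-vs-them", "type": "product"},
]
mix = content_mix(inventory)
print(mix, skews_commercial(mix))  # {'informational': 40.0, 'product': 60.0} True
```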

Finally, establish a monthly prompt testing cadence. Select 15 to 25 queries that represent how your buyers research in your category. Run them across ChatGPT, Perplexity, and Google AI Overviews. Log which brands appear, what sources get cited, and where your content is absent. This is your baseline. Without it, you are making changes without a way to evaluate whether they are working.
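A baseline is only useful if it is logged consistently. The Python sketch below shows one possible record format for the monthly prompt test; the `PromptResult` class, its field names, and the CSV layout are assumptions, not a standard. It does not query any AI platform: the brands and cited domains are filled in from whatever collection method the team uses, whether manual review or a tracking tool.

```python
import csv
import io
from dataclasses import dataclass, field

FIELDS = ["run_date", "platform", "query", "brands_mentioned", "cited_domains"]


@dataclass
class PromptResult:
    run_date: str   # ISO date of the test run, e.g. "2025-06-01"
    platform: str   # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    query: str      # the full conversational question as submitted
    brands_mentioned: list[str] = field(default_factory=list)
    cited_domains: list[str] = field(default_factory=list)


def to_csv(results: list[PromptResult]) -> str:
    """Serialize results to CSV, joining list fields with semicolons."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    for r in results:
        writer.writerow({
            "run_date": r.run_date,
            "platform": r.platform,
            "query": r.query,
            "brands_mentioned": ";".join(r.brands_mentioned),
            "cited_domains": ";".join(r.cited_domains),
        })
    return buffer.getvalue()


def absent_from(results: list[PromptResult], brand: str) -> list[tuple[str, str]]:
    """(platform, query) pairs where the brand was not mentioned."""
    return [(r.platform, r.query) for r in results if brand not in r.brands_mentioned]


log = [
    PromptResult("2025-06-01", "Perplexity", "What is the best tool for X?",
                 ["Acme", "Globex"], ["acme.com", "g2.com"]),
    PromptResult("2025-06-01", "ChatGPT", "What is the best tool for X?",
                 ["Globex"], ["globex.com"]),
]
print(to_csv(log))
print(absent_from(log, "Acme"))
```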

Tracking AI search performance requires a different set of metrics than traditional SEO. Rankings and organic traffic measure performance in the traditional SERP. They do not capture what is happening inside AI-generated answers.

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Visibility Score | % of relevant prompts where your brand is mentioned across target AI platforms | Core AI presence indicator. Answers whether AI systems associate your brand with your category. |
| Citation Share | % of AI citations that reference your domain as a source | Measures retrieval authority. High citation share means AI treats your content as a credible source. |
| Share of Voice | Your brand's share of total mentions relative to named competitors in AI answers | Competitive positioning. Not just whether you appear, but how prominently relative to alternatives. |
| Source Mention Rate | How often your brand appears across third-party sources that AI platforms cite | Ecosystem health. A low rate signals over-reliance on owned content and a weak off-site presence. |
| Sentiment | Whether AI describes your brand positively, negatively, or neutrally when it appears | Brand risk indicator. Visibility without positive framing does not drive buyer consideration. |
| Positioning Accuracy | Whether AI descriptions align with your intended category positioning and messaging | Strategy signal. Misaligned AI descriptions create wrong-fit traffic and confuse buyers. |

Visibility Score and Citation Share are your primary performance indicators. Track them monthly. Share of Voice tells you whether you are gaining or losing ground relative to competitors over time. Sentiment and Positioning Accuracy are the brand health layer: they tell you whether the visibility you are earning is working in your favor or creating noise.
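The definitions in the metrics table translate directly into arithmetic over a prompt-test log. The Python sketch below is one hypothetical way to compute the three core numbers; the record shape (a `brands` list and a `citations` list per prompt run) is an assumption, and domain matching is exact, so variants such as `www.` subdomains would need normalizing first. Commercial tools may define or weight these metrics differently.

```python
def visibility_score(runs: list[dict], brand: str) -> float:
    """% of prompt runs in which the brand is mentioned at all."""
    if not runs:
        return 0.0
    hits = sum(1 for run in runs if brand in run["brands"])
    return 100 * hits / len(runs)


def citation_share(runs: list[dict], domain: str) -> float:
    """% of all citations across runs that point to the given domain."""
    citations = [d for run in runs for d in run["citations"]]
    if not citations:
        return 0.0
    return 100 * citations.count(domain) / len(citations)


def share_of_voice(runs: list[dict], brand: str, competitors: list[str]) -> float:
    """Brand mentions as a % of mentions of the brand plus named competitors."""
    tracked = {brand, *competitors}
    mentions = [b for run in runs for b in run["brands"] if b in tracked]
    if not mentions:
        return 0.0
    return 100 * mentions.count(brand) / len(mentions)


runs = [
    {"brands": ["Acme", "Globex"], "citations": ["acme.com", "g2.com"]},
    {"brands": ["Globex"], "citations": ["globex.com"]},
    {"brands": ["Acme", "Initech"], "citations": ["acme.com", "reddit.com"]},
    {"brands": [], "citations": ["wikipedia.org"]},
]
print(visibility_score(runs, "Acme"))                        # 50.0
print(round(citation_share(runs, "acme.com"), 1))            # 33.3
print(share_of_voice(runs, "Acme", ["Globex", "Initech"]))   # 40.0
```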

Only 16% of brands currently track these signals in any systematic way. Establishing this measurement infrastructure now, before the space gets crowded, is itself a competitive advantage.

AI search is not a separate discipline from SEO. It is its next layer, built on the same foundations of technical health, content quality, and earned authority but extending into a new surface where synthesized answers, not ranked links, are what buyers encounter first.

The practices that drive AI visibility are not complicated. Answer questions directly. Structure content for extraction. Apply schema markup. Build informational authority before pushing conversion. Avoid manufacturing content purely for prompt coverage. Measure your Visibility Score and Citation Share monthly.

What makes the opportunity real right now is how few teams are doing this systematically. The competitive window is open. The brands building retrieval authority today are establishing the citation patterns that AI systems will carry forward for years. That advantage compounds, just as domain authority compounded in the early days of SEO. The teams that understand the mechanism and act on it now will be the ones their competitors are trying to reverse-engineer in 2028.

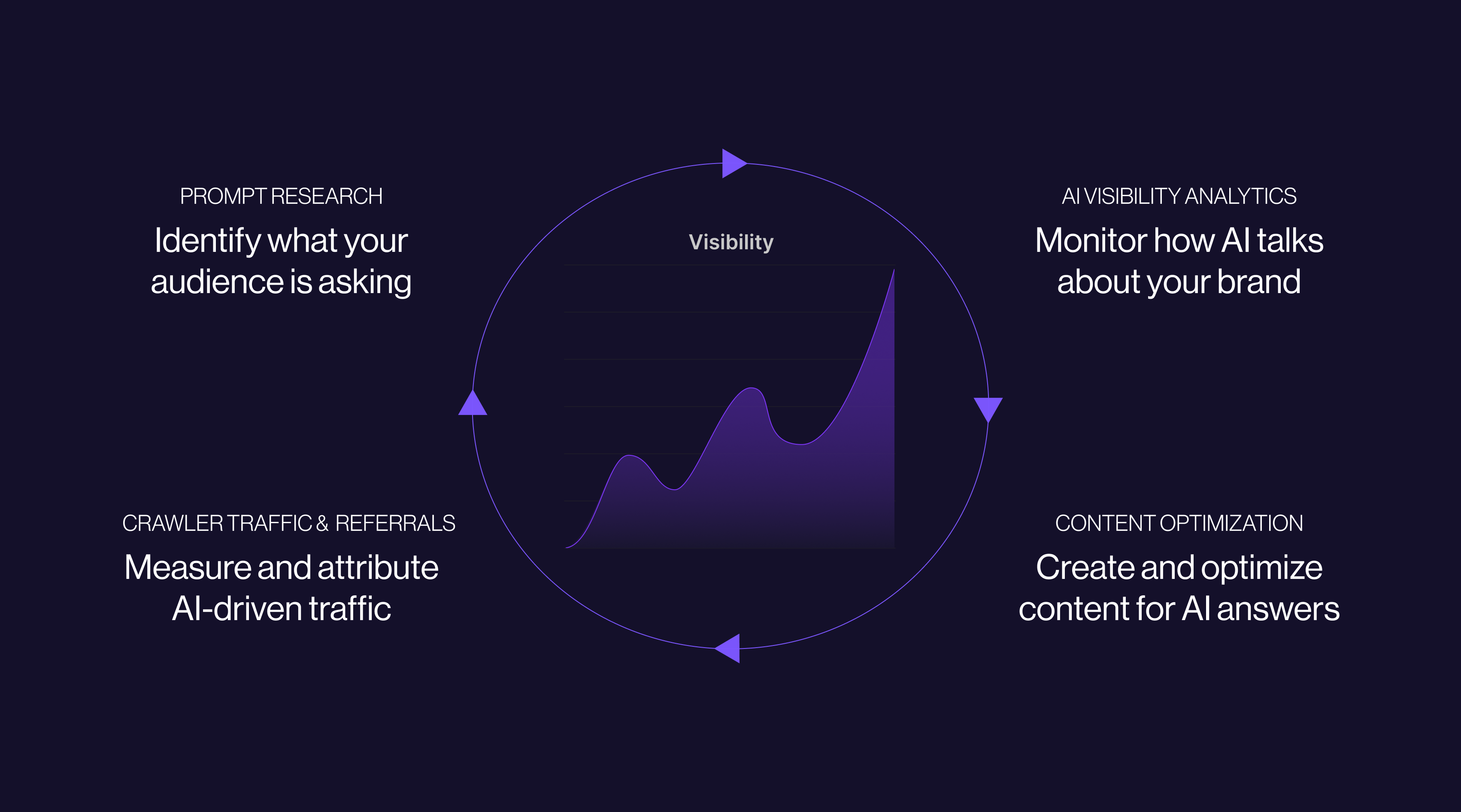

Want to see where your brand stands in AI search today? Cognizo tracks your Visibility Score, Citation Share, and Share of Voice across the AI platforms your buyers use, so you always know exactly where you appear, what AI says about you, and where competitors are pulling ahead.

SEO optimizes pages to rank in a traditional search results list. AI search optimization structures content so that AI systems can retrieve, extract, and cite it when generating synthesized answers. The two practices build on the same technical foundations, including crawlability, structured data, and content quality, but AI optimization adds answer-first formatting, entity clarity, and passage-level extractability as requirements. They are additive, not competing. Strong SEO remains the prerequisite: 99% of AI Overview citations come from pages already ranking in the organic top 10.

Structure your content so that the first paragraph of each section directly answers the question implied by the heading. Apply FAQPage and HowTo schema markup to all relevant content. Define entities clearly on first use. Build a body of genuinely informational content before pushing product-forward messaging. Earn third-party mentions in credible publications and indexed community platforms. AI systems prioritize content that is clear, credible, and easy to extract. The brands that get cited consistently are the ones that have built retrieval authority across both their owned content and the wider ecosystem of sources AI draws from.

Schema markup matters for AI search. It tells AI crawler systems exactly what type of content they are reading, what questions it answers, and what entities it defines. FAQPage schema in particular functions as a pre-formatted list of answer candidates for AI retrieval. Properly marked-up content is more likely to be retrieved accurately and less likely to be misinterpreted. Schema is one of the few technical practices where the implementation effort is low and the retrieval benefit arrives as soon as the marked-up pages are recrawled.

Content freshness is an active retrieval signal. According to Semrush's AI Visibility Index, 40 to 60% of cited sources in AI-generated responses rotate month over month. Pages that go more than a quarter without meaningful updates are significantly more likely to lose citations. The minimum effective cadence is a quarterly review and refresh of your highest-priority informational pages, with more frequent updates for content covering fast-moving topics.
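The quarterly-review guidance can be turned into a simple stale-page check. This Python sketch assumes a hypothetical inventory mapping each priority URL to the date of its last meaningful update, and uses 90 days as a stand-in for "a quarter"; a tighter threshold for fast-moving topics is a matter of passing a smaller `max_age_days`.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """URLs whose last meaningful update is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


inventory = {
    "/guide/what-is-geo": date(2025, 6, 15),
    "/guide/ai-overviews": date(2025, 3, 1),
    "/guide/schema-for-ai": date(2025, 4, 2),
}
print(stale_pages(inventory, today=date(2025, 7, 1)))  # ['/guide/ai-overviews']
```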

Using AI tools in the content process is not the problem. Publishing at high volume with content that exists primarily to cover prompt patterns rather than genuinely answer questions is the problem. AI retrieval systems identify content that lacks coherent argument, original perspective, and editorial judgment. Domains associated with this type of content accumulate a retrieval reputation that is difficult to reverse. Use AI tools to assist the writing process. Do not use them to replace the judgment about what is worth writing and why.

Build a set of 15 to 25 conversational queries that map to how buyers in your category actually research before making a decision. These should be full questions, not keyword strings: "What is the best tool for X?" or "How does Y compare to Z?" Run them monthly across ChatGPT, Perplexity, and Google AI Overviews. Log which brands appear, which sources get cited, and where your domain is absent. Month-over-month changes in this data tell you more about your AI optimization program than any single ranking report.
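To make the month-over-month comparison concrete, the Python sketch below diffs two monthly runs of the same query set and reports where a brand gained or lost presence. The record shape and the function names `presence` and `month_over_month` are hypothetical. Only (platform, query) pairs tested in both months are compared, so changes to the query set do not show up as false gains or losses.

```python
def presence(runs: list[dict], brand: str) -> dict:
    # Map each (platform, query) pair to whether the brand appeared in the answer.
    return {(run["platform"], run["query"]): brand in run["brands"] for run in runs}


def month_over_month(previous: list[dict], current: list[dict], brand: str) -> dict:
    """Queries where the brand newly appeared or disappeared between two runs."""
    before, after = presence(previous, brand), presence(current, brand)
    shared = before.keys() & after.keys()
    return {
        "gained": sorted(k for k in shared if after[k] and not before[k]),
        "lost": sorted(k for k in shared if before[k] and not after[k]),
    }


may = [
    {"platform": "ChatGPT", "query": "How does Y compare to Z?", "brands": ["Acme"]},
    {"platform": "Perplexity", "query": "What is the best tool for X?", "brands": []},
]
june = [
    {"platform": "ChatGPT", "query": "How does Y compare to Z?", "brands": []},
    {"platform": "Perplexity", "query": "What is the best tool for X?", "brands": ["Acme", "Globex"]},
]
print(month_over_month(may, june, "Acme"))
```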